Attention in Long Short-Term Memory Recurrent Neural Networks - MachineLearningMastery.com

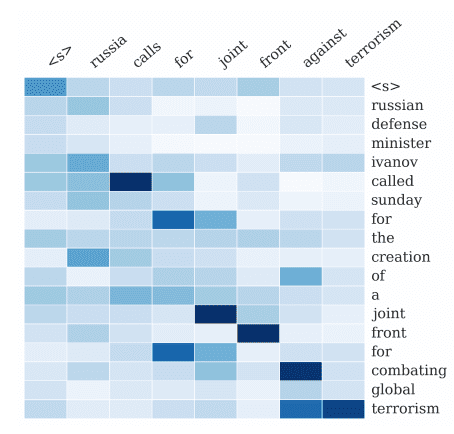

Source: MachineLearningMastery.com

The Encoder-Decoder architecture is popular because it has demonstrated state-of-the-art results across a range of domains. A limitation of the architecture is that it encodes the input sequence to a fixed-length internal representation. This limits the length of input sequences that can reasonably be learned and results in worse performance for very […]